The AI Showdown: Claude vs Gemini vs ChatGPT Building a Tower Defense Game

Three leading AI models. One identical prompt. Three completely different implementations. See how Claude Opus 4.5, Gemini 3, and ChatGPT 5.2 each tackled the challenge of building a fully playable tower defense game.

The Experiment

What happens when you give three of the world's most advanced AI models the exact same prompt with zero additional guidance?

I wanted to find out. So I gave Claude Opus 4.5 (Extended Think Mode), Gemini 3 (Think Mode), and ChatGPT 5.2 (Think Mode) an identical, detailed prompt to build a complete tower defense game in a single HTML file.

No follow-ups. No corrections. No hints. Just one comprehensive prompt and their best attempt at implementation.

Why I Did This

As a full-stack developer who increasingly uses AI to prototype, debug, and accelerate builds, I wanted to understand how different models actually behave when faced with a real, multi-system coding task—without hand-holding. This experiment mirrors how I use AI in production: one clear spec, then execution.

This isn't academic curiosity. Understanding which AI excels at what helps me choose the right tool for each phase of development—whether I need creative problem-solving, systematic implementation, or polished execution.

The Challenge: A Detailed Tower Defense Prompt

Each AI received an extensive prompt covering:

- Game Mechanics: 10 rounds, enemy paths, win/lose conditions, economy system

- Tower Types: Archer, Mage, and Cannon towers with specific stats and upgrade paths

- Tower Placement: Click-to-place system with range indicators and validation

- Enemy Types: Goblins, Trolls, and Bosses with unique characteristics

- Visual Requirements: Dark Fantasy/Steampunk aesthetic with detailed graphics

- Technical Constraints: Single HTML file, no external dependencies, fully playable

The prompt was comprehensive—over 1,500 words detailing game mechanics, visual styling, tower placement requirements, enemy waves, and success criteria. It included tables, specifications, and critical implementation details.

The Complete Prompt:

Below is the exact prompt given to all three AI models:

Create a complete tower defense game in a single HTML file with embedded CSS and JavaScript. The game should be fully playable and visually polished.

- 10 rounds total with increasing difficulty

- Enemy path: Enemies follow a predetermined path from start to finish

- Win condition: Survive all 10 rounds

- Lose condition: 20 enemies reach the end (player base health = 20)

- Starting gold: 200

- Gold per kill: Goblin = 10g, Troll = 25g, Boss = 100g

- Bonus gold: 50g at the end of each round survived

Players can build and upgrade three tower types:

| Tower Type | Base Cost | Upgrade Cost | Damage | Range | Attack Speed | Special |
|---|---|---|---|---|---|---|
| Archer Tower | 50g | 30g | 10 → 20 | Medium | Fast | Fires arrows |
| Mage Tower | 100g | 50g | 25 → 50 | Long | Slow | Magical projectiles, area splash damage |
| Cannon Tower | 150g | 75g | 40 → 80 | Short | Very Slow | Explosive projectiles, high single-target damage |

CRITICAL IMPLEMENTATION REQUIREMENTS:

- Tower placement MUST work by clicking directly on the game grid/canvas

- First, player clicks a tower type button in the shop to SELECT it

- Then, player clicks on a valid grass tile on the game board to PLACE the tower

- Show a preview/ghost image of the tower following the mouse cursor after selection

- Display the range circle preview while hovering over valid placement locations

- Only allow placement on grass tiles (NOT on the path, NOT on existing towers)

- Deduct gold immediately upon successful placement

- After placing, automatically deselect so player must click shop again for next tower

- Towers can be clicked after placement to show upgrade/sell options

- Towers can be upgraded once (Level 1 → Level 2)

- Towers can be sold for 50% of total investment

- Show range indicator circle when hovering during placement and when tower is selected

Enemy Stats:

| Enemy | HP | Speed | Gold Value |
|---|---|---|---|
| Goblin | 50 | Fast | 10g |
| Troll | 150 | Slow | 25g |
| Boss | 500 | Very Slow | 100g |

Wave Progression (10 rounds):

- Rounds 1-3: Goblins only (10, 15, 20 enemies)

- Rounds 4-6: Goblins + Trolls mixed (15, 20, 25 enemies)

- Rounds 7-9: Heavy mix of Goblins and Trolls (25, 30, 35 enemies)

- Round 10: BOSS round - 5 Bosses + 20 Goblins

Enemies spawn with 2-second intervals between each enemy.

Dark Fantasy / Steampunk with an isometric pixel art aesthetic, highly detailed and atmospheric, blending medieval fantasy with industrial Victorian elements.

- Isometric/3D-looking pixel art style with depth and detail

- Hexagonal grid system with electric blue glowing hex outlines

- Highly detailed tower structures with multiple stories, flags, glowing elements

- Rich environmental details and atmospheric effects

- Dramatic lighting with glowing magical elements

- Ornate UI frames with brass/copper borders, rivets, and gears

- Single HTML file with embedded CSS and JavaScript

- No external dependencies (or use CDN if necessary)

- Must work by simply opening the HTML file in a browser

- Clean, organized code with comments

- Efficient game loop using requestAnimationFrame

- Proper collision detection and coordinate mapping

- Responsive design that scales to different screen sizes

The game should be:

- ✅ Fully playable from start to finish (all 10 rounds)

- ✅ Visually stunning matching the Dark Fantasy/Steampunk aesthetic

- ✅ Tower placement works perfectly - clicking on grid successfully places towers

- ✅ Balanced and challenging but winnable with good strategy

- ✅ Smooth animations and responsive controls

- ✅ Bug-free with no game-breaking issues

- ✅ Polished UI with ornate steampunk styling

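The wave progression in the prompt is specific enough to express as data. The sketch below is one possible reading, not what any of the three models produced. The prompt fixes the total per round and the Round 10 composition, but it gives no Goblin-to-Troll ratio and no spawn order, so `trollShare` and the ordering are assumptions, as are all function and field names.

```javascript
// Total enemies per round, taken from the prompt's wave progression.
const ROUND_TOTALS = [10, 15, 20, 15, 20, 25, 25, 30, 35, 25];
const SPAWN_INTERVAL_MS = 2000; // "2-second intervals between each enemy"

// ASSUMPTION: the prompt says "mixed" and "heavy mix" without giving ratios.
function trollShare(round) {
  if (round <= 3) return 0;   // Rounds 1-3: Goblins only
  if (round <= 6) return 0.2; // hypothetical ratio for "mixed"
  return 0.4;                 // hypothetical ratio for "heavy mix"
}

// Returns the spawn queue for a round: [{ type, spawnAtMs }, ...].
function buildWave(round) {
  if (!Number.isInteger(round) || round < 1 || round > 10) {
    throw new RangeError(`round must be 1-10, got ${round}`);
  }
  let types;
  if (round === 10) {
    // Round 10 is fully specified: 5 Bosses + 20 Goblins.
    // ASSUMPTION: Goblins first, Bosses last; the prompt gives no order.
    types = [...Array(20).fill("goblin"), ...Array(5).fill("boss")];
  } else {
    const total = ROUND_TOTALS[round - 1];
    const trolls = Math.round(total * trollShare(round));
    types = [
      ...Array(total - trolls).fill("goblin"),
      ...Array(trolls).fill("troll"),
    ];
  }
  // Enemy i spawns i intervals after the round starts.
  return types.map((type, i) => ({ type, spawnAtMs: i * SPAWN_INTERVAL_MS }));
}
```

Under these assumptions, `buildWave(1)` yields ten Goblins, with the last one spawning 18 seconds into the round.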

The Competitors

Claude Opus 4.5

Extended Think Mode

Anthropic's flagship model with extended reasoning capabilities, designed for complex coding tasks and deep problem-solving.

Gemini 3

Think Mode

Google's advanced multimodal AI with enhanced reasoning, optimized for technical tasks and creative problem-solving.

ChatGPT 5.2

Think Mode

OpenAI's latest model with advanced reasoning capabilities, built for complex coding and systematic implementation.

How I Evaluated Each Implementation

I didn't just run the games—I evaluated them as a senior developer would evaluate a junior's pull request. Here's what I looked for:

- Code Readability & Maintainability: Is the code organized into logical sections? Can I quickly locate game state, rendering logic, and input handling?

- Extensibility: How easy would it be to add a new tower type or enemy? Is the architecture rigid or flexible?

- Bug Surface Area: How well does it handle edge cases like invalid tower placement, boundary conditions, or rapid user input?

- UI/UX Feedback: Does the player always understand what's happening? Are errors communicated clearly?

- Production-Readiness: Could this ship as-is, or would it need significant refactoring?

These aren't academic criteria—this is how I actually evaluate AI-generated code before integrating it into real projects.
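One way to make the extensibility criterion concrete is a data-driven tower table, where adding a tower means adding an entry rather than a new branch of logic. The sketch below is illustrative only. The stats come from the prompt's tower table, while the `TOWERS` object, the function names, the rounding rule, and the `frost` tower are hypothetical and do not come from any of the three implementations.

```javascript
// Tower stats from the prompt's table; damage is [level 1, level 2].
const TOWERS = {
  archer: { cost: 50, upgradeCost: 30, damage: [10, 20] },
  mage: { cost: 100, upgradeCost: 50, damage: [25, 50] },
  cannon: { cost: 150, upgradeCost: 75, damage: [40, 80] },
};

// Damage for a placed tower at level 1 or 2.
function towerDamage(tower) {
  return TOWERS[tower.type].damage[tower.level - 1];
}

// Gold spent on a tower so far: base cost plus the upgrade if bought.
function totalInvestment(tower) {
  const def = TOWERS[tower.type];
  return def.cost + (tower.level === 2 ? def.upgradeCost : 0);
}

// "Towers can be sold for 50% of total investment."
// ASSUMPTION: round down to whole gold; the prompt does not say.
function sellValue(tower) {
  return Math.floor(totalInvestment(tower) * 0.5);
}

// Extensibility check: a new tower is one new entry, with no new branches.
// HYPOTHETICAL tower, not part of the prompt.
TOWERS.frost = { cost: 120, upgradeCost: 60, damage: [15, 30] };
```

With this shape, an upgraded Archer Tower sells for 40g (half of 50g + 30g), and the hypothetical `frost` tower works with every function without further changes.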

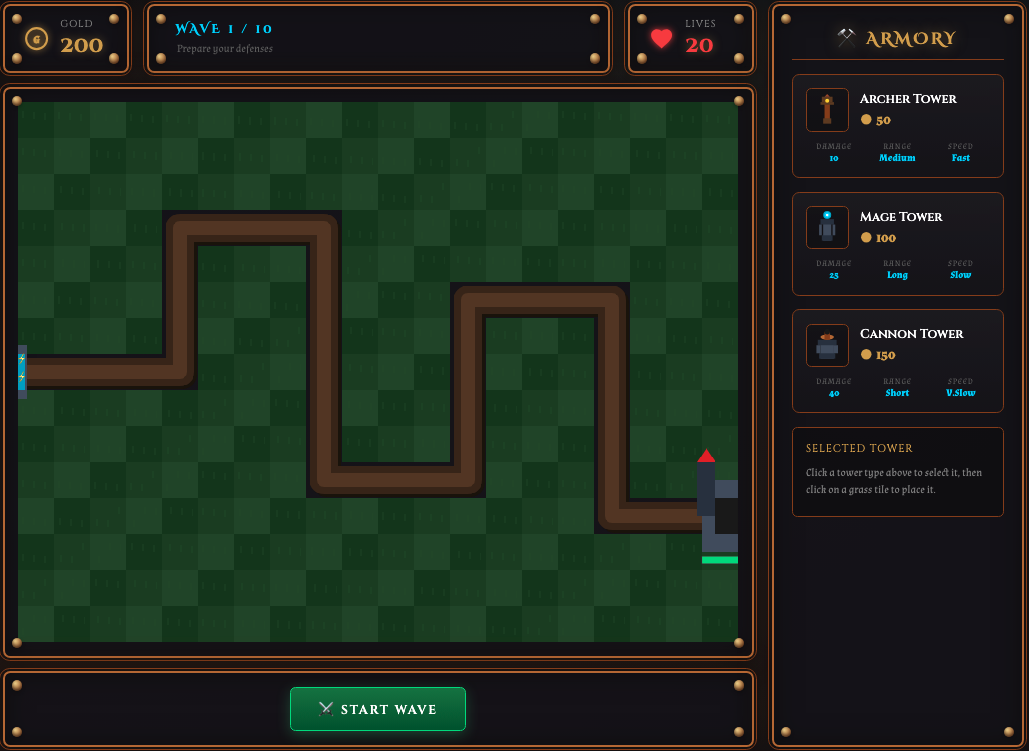

Claude Opus 4.5 Implementation

Extended Think Mode enabled deep planning and polished execution

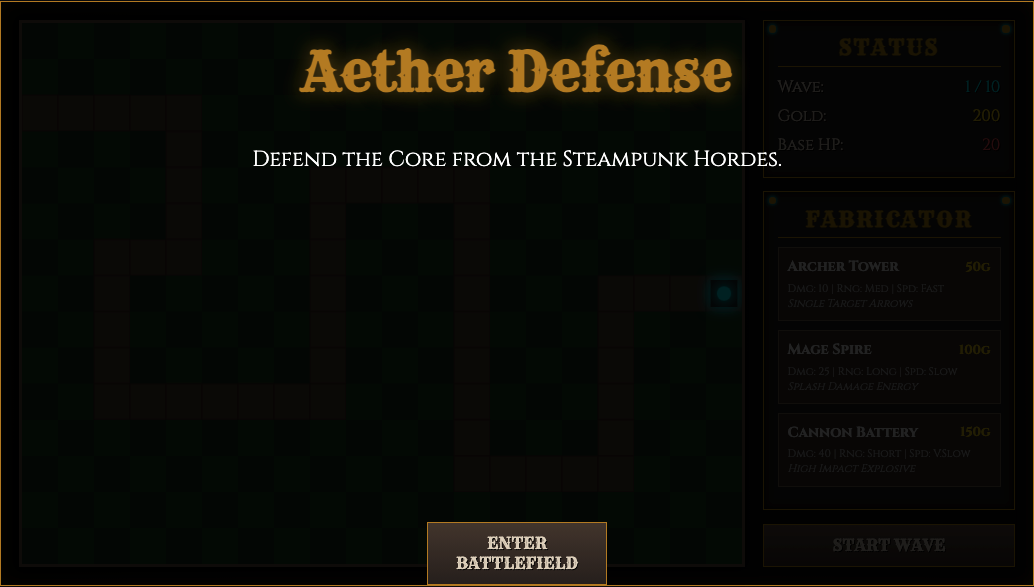

Gemini 3 Implementation

Think Mode facilitated creative visual interpretation

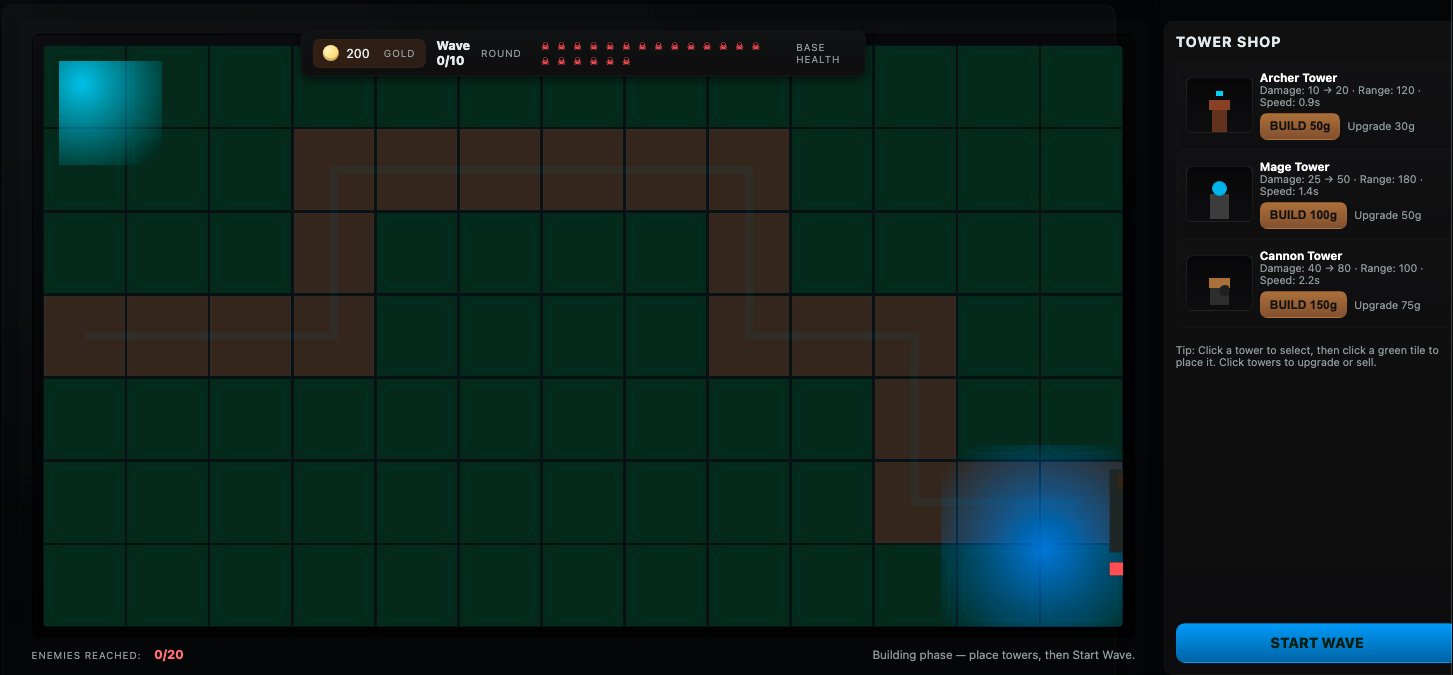

ChatGPT 5.2 Implementation

Think Mode enabled systematic approach to game logic

Key Observations

What All Three Got Right

- Functional Game Loop: All three created playable games with working tower placement, enemy spawning, and win/lose conditions

- Single File Implementation: Each delivered on the technical requirement of a standalone HTML file

- Visual Presentation: All attempted to create engaging visuals matching the prompt's aesthetic requirements

- Core Mechanics: Tower upgrades, enemy waves, and resource management were implemented across all three
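The resource-management rules in the prompt are simple enough to sketch as pure functions. This is an illustration under assumptions, not code from any of the three games. The gold values, starting gold, and base health are from the prompt; the state shape and function names are made up for the example.

```javascript
const KILL_GOLD = { goblin: 10, troll: 25, boss: 100 }; // from the prompt
const ROUND_BONUS = 50; // "50g at the end of each round survived"

// Hypothetical state shape: starting gold 200, base health 20.
function newGame() {
  return { gold: 200, lives: 20, round: 1 };
}

// Each function returns a new state instead of mutating the old one.
function rewardKill(state, enemyType) {
  return { ...state, gold: state.gold + KILL_GOLD[enemyType] };
}

function completeRound(state) {
  return { ...state, gold: state.gold + ROUND_BONUS, round: state.round + 1 };
}

// Returns null when the player cannot afford the purchase.
function spend(state, cost) {
  return cost > state.gold ? null : { ...state, gold: state.gold - cost };
}

// An enemy reaching the end costs one life, never dropping below zero.
function enemyLeaked(state) {
  return { ...state, lives: Math.max(0, state.lives - 1) };
}

function outcome(state) {
  if (state.lives === 0) return "lost";
  // ASSUMPTION: completing round 10 advances the round counter to 11.
  return state.round > 10 ? "won" : "playing";
}
```

Starting from `newGame()`, killing one Goblin and finishing the round leaves 260 gold, and `spend` refuses any purchase above the current balance by returning `null`.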

Where They Differed

- Code Organization: Claude's code was more modular, Gemini favored compact patterns, and ChatGPT took a systematic approach

- Visual Styling: Each interpreted "Dark Fantasy/Steampunk" differently—some prioritized detail, others prioritized clarity

- User Experience: Tower placement, UI feedback, and controls varied significantly between implementations

- Edge Cases: Handling of boundary conditions and error states showed different levels of defensive programming
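As a sketch of what defensive placement handling can look like, the function below checks the prompt's placement rules in order and returns a reason on failure, so the UI can tell the player what went wrong. It is not taken from any of the three implementations. The tile codes, the reason strings, and the use of a square grid (the prompt asks for hexagons) are assumptions made to keep the example short.

```javascript
// Hypothetical tile codes for a small square grid.
// ASSUMPTION: the prompt asks for hexagons; squares keep the sketch short.
const GRASS = 0;
const PATH = 1;

const EXAMPLE_GRID = [
  [GRASS, PATH, GRASS],
  [GRASS, PATH, GRASS],
];

// Returns { ok: true } or { ok: false, reason } so the UI can explain failures.
function validatePlacement(grid, towers, gold, cost, col, row) {
  // Reject fractional, negative, or too-large indices before touching the grid.
  const inBounds =
    Number.isInteger(col) && Number.isInteger(row) &&
    row >= 0 && row < grid.length &&
    col >= 0 && col < grid[row].length;
  if (!inBounds) return { ok: false, reason: "out-of-bounds" };

  // "Only allow placement on grass tiles."
  if (grid[row][col] !== GRASS) return { ok: false, reason: "not-grass" };

  // "NOT on existing towers."
  if (towers.some((t) => t.col === col && t.row === row)) {
    return { ok: false, reason: "occupied" };
  }

  // Gold is deducted only after a successful placement, so check it last.
  if (gold < cost) return { ok: false, reason: "insufficient-gold" };

  return { ok: true };
}
```

For example, `validatePlacement(EXAMPLE_GRID, [], 200, 50, 1, 0)` fails with `"not-grass"` because column 1 is the path, while the same call with column 0 succeeds.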

The Think Mode Advantage

All three models used their respective "think" modes, which allowed them to:

- Plan architecture before writing code

- Consider edge cases and game balance

- Debug logic internally before outputting

- Produce more complete initial implementations

Without think modes enabled, I've seen similar prompts result in incomplete loops, broken economies, or placeholder visuals. In this experiment, all three think-mode runs produced finished, playable systems instead of prototypes, a critical difference when you need a working baseline to build on, not a fragment.

What This Experiment Reveals About AI-Assisted Development

1. Prompt Quality Still Matters Immensely

A detailed, well-structured prompt with clear success criteria produced significantly better results than vague requests. Specifying exact mechanics, visual requirements, and technical constraints led to more complete implementations.

2. Each Model Has Distinct Strengths

Rather than one being "better," each model showed unique capabilities. Some excelled at visual interpretation, others at code structure, and others at systematic implementation. The "best" choice depends on your specific needs.

3. Think Modes Deliver Noticeable Value

Compared with the non-think attempts I've seen on similar prompts, the extended reasoning runs were more complete, handled errors better, and had fewer obvious bugs. The extra planning time produced code that was closer to production-ready.

4. AI Can Handle Complex Multi-System Tasks

A tower defense game requires coordinating graphics, game state, collision detection, user input, enemy path following, and resource management. All three models successfully integrated these systems.
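Of these systems, enemy movement is the easiest to isolate. The prompt specifies a predetermined path, so movement reduces to walking a list of waypoints by `speed * dt` each frame. The sketch below shows that idea under assumptions: the field names, pixel units, and the `leaked` flag are illustrative and not taken from any of the three games. In a browser, `dtSeconds` would typically be derived from the timestamps passed to `requestAnimationFrame` callbacks.

```javascript
// Moves an enemy along a list of waypoints by speed * dt.
// enemy: { x, y, speed (px/s), waypoint (index of the next target), leaked }
// ASSUMPTION: all names and units here are illustrative.
function advanceEnemy(enemy, path, dtSeconds) {
  let remaining = enemy.speed * dtSeconds;
  while (remaining > 0 && enemy.waypoint < path.length) {
    const target = path[enemy.waypoint];
    const dx = target.x - enemy.x;
    const dy = target.y - enemy.y;
    const dist = Math.hypot(dx, dy);
    if (dist <= remaining) {
      // Reach the waypoint and carry leftover movement into the next segment.
      enemy.x = target.x;
      enemy.y = target.y;
      enemy.waypoint += 1;
      remaining -= dist;
    } else {
      // Move part of the way toward the waypoint (dist > 0 in this branch).
      enemy.x += (dx / dist) * remaining;
      enemy.y += (dy / dist) * remaining;
      remaining = 0;
    }
  }
  // Past the last waypoint means the enemy reached the player's base.
  enemy.leaked = enemy.waypoint >= path.length;
  return enemy;
}
```

Carrying the leftover distance across waypoints keeps movement independent of frame rate, so a long frame does not make an enemy stall at a corner.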

What This Would Need for Production Use

These implementations are impressive demos, but shipping them to real users would require additional work. Here's what I'd change:

- Modularize the Code: Split logic into separate modules (even if still bundled at build time) for game state, rendering, input handling, and enemy AI

- Add Tests: Basic unit tests for wave logic, placement validation, and damage calculations would catch regressions

- Performance Profiling: Later rounds with many towers and enemies need optimization—measure frame rates and identify bottlenecks

- Accessibility Improvements: Keyboard navigation, better color contrast, screen reader support, and reduced motion options

- Mobile Optimization: Touch controls need refinement, and performance on mobile devices requires testing and tuning

- Save/Load State: Players expect to resume progress, especially in longer games
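As an illustration of the "Add Tests" item, here is a minimal sketch of a damage calculation written as a pure function, with a few dependency-free checks beside it. None of this comes from the three implementations. The prompt only says the Mage Tower deals "area splash damage", so the radius, the full-damage-inside rule, and every name below are assumptions; a real project would use a proper test runner instead of the tiny helper shown.

```javascript
// Applies damage to every enemy within `radius` of the impact point.
// ASSUMPTION: full damage inside the radius (boundary included), none outside.
function applySplash(enemies, impact, radius, damage) {
  return enemies.map((e) => {
    const inRange = Math.hypot(e.x - impact.x, e.y - impact.y) <= radius;
    return inRange ? { ...e, hp: Math.max(0, e.hp - damage) } : e;
  });
}

// Tiny dependency-free test helper; a real project would use a test runner.
function expectEqual(actual, expected, label) {
  if (actual !== expected) {
    throw new Error(`${label}: expected ${expected}, got ${actual}`);
  }
}

function runSplashTests() {
  const enemies = [
    { id: "near", x: 10, y: 0, hp: 50 },   // Goblin HP from the prompt
    { id: "edge", x: 30, y: 40, hp: 150 }, // exactly 50 px away (3-4-5 triangle)
    { id: "far", x: 200, y: 0, hp: 500 },  // well outside the radius
  ];
  const origin = { x: 0, y: 0 };
  const after = applySplash(enemies, origin, 50, 25); // Mage level 1 damage
  expectEqual(after[0].hp, 25, "near enemy takes full damage");
  expectEqual(after[1].hp, 125, "enemy on the radius boundary is hit");
  expectEqual(after[2].hp, 500, "enemy outside the radius is untouched");
  expectEqual(applySplash(enemies, origin, 50, 999)[0].hp, 0, "hp never goes negative");
  expectEqual(enemies[0].hp, 50, "input array is not mutated");
  return "ok";
}
```

Keeping the calculation free of rendering and DOM access is what makes it testable; the same approach applies to wave logic and placement validation.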

This isn't a criticism of the AI implementations—it's the reality of software development. Getting to 80% is fast with AI. That last 20% still requires engineering judgment, testing, and iteration.

Conclusion: The Future of AI-Assisted Development

This experiment demonstrates that modern AI models have crossed a significant threshold: they can take complex requirements and produce functional, integrated systems in a single pass.

More importantly, it highlights that the future isn't about one AI replacing another—it's about understanding each model's characteristics and choosing the right tool for the task.

Whether you're building games, web applications, or complex systems, AI-assisted development is no longer experimental. It's practical, powerful, and, with the kind of engineering review described above, ready to contribute to production work.

For me, AI isn't a replacement for engineering skill—it's a force multiplier. Knowing how to write precise specs, evaluate output critically, and decide when to refactor or rebuild is now a core development skill. This experiment reflects how I approach modern software development: deliberate, analytical, and tool-agnostic.

The best developers won't be those who refuse AI or blindly accept its output. They'll be the ones who know how to leverage it strategically while maintaining high engineering standards.